Building a brighter way for capturing cancer during surgery

University of Texas at Dallas researchers have demonstrated that imaging technology used to map the universe shows promise for more accurately and quickly identifying cancer cells in the operating room.

In a study published in the Sept. 14 edition of the journal Cancers, Dr. Baowei Fei and colleagues showed that hyperspectral imaging and artificial intelligence could predict the presence of cancer cells with 80% to 90% accuracy in 293 tissue specimens from 102 head and neck cancer surgery patients.

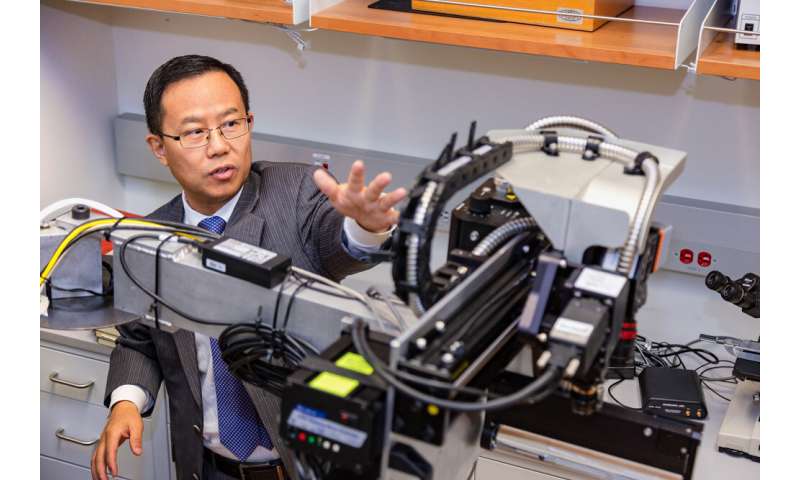

Fei, professor of bioengineering and the Cecil H. and Ida Green Chair in Systems Biology Science in the Erik Jonsson School of Engineering and Computer Science, recently received a $1.6 million grant from the Cancer Prevention & Research Institute of Texas (CPRIT) to further develop the technology, called a smart surgical microscope.

Once developed, the technique would need to be tested in clinical studies before it could be used in operating rooms.

“We hope that this technology can help surgeons better detect cancer during surgery, reduce operating time, lower medical costs and save lives,” Fei said. “Hyperspectral imaging is noninvasive, portable and does not require radiation or a contrast agent.”

From Satellites to Medicine

Currently, pathologists analyze tissue samples from a patient who is undergoing cancer surgery and still under anesthesia in a process called intraoperative frozen section analysis. Several resections may be needed during a procedure as surgeons try to reach tissue with clear, or noncancerous, margins. In some cases, cancer cells cannot be sampled or detected during surgery, resulting in additional surgery.

Hyperspectral imaging, originally used in satellite imagery, orbiting telescopes and other applications, goes beyond what the human eye can see by examining cells under ultraviolet and near-infrared light at micrometer resolution. By analyzing how cells reflect and absorb light across the electromagnetic spectrum, experts can get a spectral image of cells that is as unique as a fingerprint.

Fei said the CPRIT grant will support his research group’s ongoing efforts to “train” the microscope to recognize cancer with the help of images of cancerous and noncancerous cells in an extensive database.

“If we have a large database that knows what is normal tissue and what is cancerous tissue, then we can train our system to learn the features of the spectra,” Fei said. “Once it’s trained, the smart device can predict whether a new sample is a cancerous tissue or not. That’s how machine learning can help with a cancer diagnosis.”
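To make the idea of training on labeled spectra concrete, here is a minimal sketch in Python. It is not the research group's method, which the article does not describe in detail. It swaps in a simple nearest-centroid classifier, and the reflectance values, the four-band spectra and the function names are all invented for illustration.

```python
from statistics import fmean


def mean_spectrum(spectra):
    """Average a list of equal-length spectra band by band."""
    return [fmean(band) for band in zip(*spectra)]


def squared_distance(a, b):
    """Sum of squared differences between two spectra."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def train(labeled_spectra):
    """Map each label to the mean spectrum of its training examples."""
    return {label: mean_spectrum(s) for label, s in labeled_spectra.items()}


def predict(model, spectrum):
    """Return the label whose mean spectrum is closest to the new spectrum."""
    return min(model, key=lambda label: squared_distance(model[label], spectrum))


# Hypothetical reflectance values at four wavelengths (made up, not real data).
training_data = {
    "normal": [[0.20, 0.35, 0.60, 0.80], [0.22, 0.33, 0.58, 0.82]],
    "cancerous": [[0.40, 0.45, 0.50, 0.55], [0.42, 0.47, 0.48, 0.53]],
}

model = train(training_data)
new_sample = [0.41, 0.44, 0.51, 0.56]
print(predict(model, new_sample))
```

A real system would work with far more wavelengths per pixel and a much larger labeled database, but the train-then-predict pattern is the same one Fei describes.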

Fei said the technology should be able to provide nearly instant results, which could significantly reduce surgery time and cost. Each frozen resection evaluation under current procedures can take 30 to 45 minutes. According to an analysis in the April 18, 2018, issue of JAMA Surgery, the average cost of the operating room is estimated to be $36 per minute, so each 30-minute frozen section analysis adds more than $1,000 to the cost of surgery. In many cases, multiple evaluations are needed, which further prolongs the surgical time and increases the costs.
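The cost figure can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above ($36 per minute, 30 to 45 minutes per evaluation); the function name and the three-evaluation case are illustrative assumptions, not figures from the study.

```python
COST_PER_MINUTE = 36  # dollars per operating-room minute, as cited above


def frozen_section_cost(minutes, evaluations=1):
    """Operating-room cost in dollars of waiting on frozen section analysis."""
    return COST_PER_MINUTE * minutes * evaluations


low = frozen_section_cost(30)       # one 30-minute evaluation
high = frozen_section_cost(45)      # one 45-minute evaluation
three = frozen_section_cost(30, 3)  # hypothetical case: three evaluations

print(f"One evaluation: ${low:,} to ${high:,}")
print(f"Three 30-minute evaluations: ${three:,}")
```

At 30 minutes, a single evaluation comes to $1,080, which matches the "more than $1,000" figure, and the total scales linearly with each additional evaluation.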

Less time in the operating room also could decrease risks for patients because they would not need to spend as much time under anesthesia.

Cancer Research at UTD

CPRIT has awarded $2.4 billion in grants to Texas research institutions and organizations since the institute was formed in 2008. Including Fei’s new award, 11 UT Dallas researchers have received 17 CPRIT grants totaling more than $14.6 million.

Recent grants to UT Dallas have supported the establishment of a small-animal imaging facility and separate research to develop a noninvasive imaging technique to detect glioblastoma, an aggressive form of brain cancer.
